SUBJECT: AI Training Law and EU AI Act - What Teams Must Know

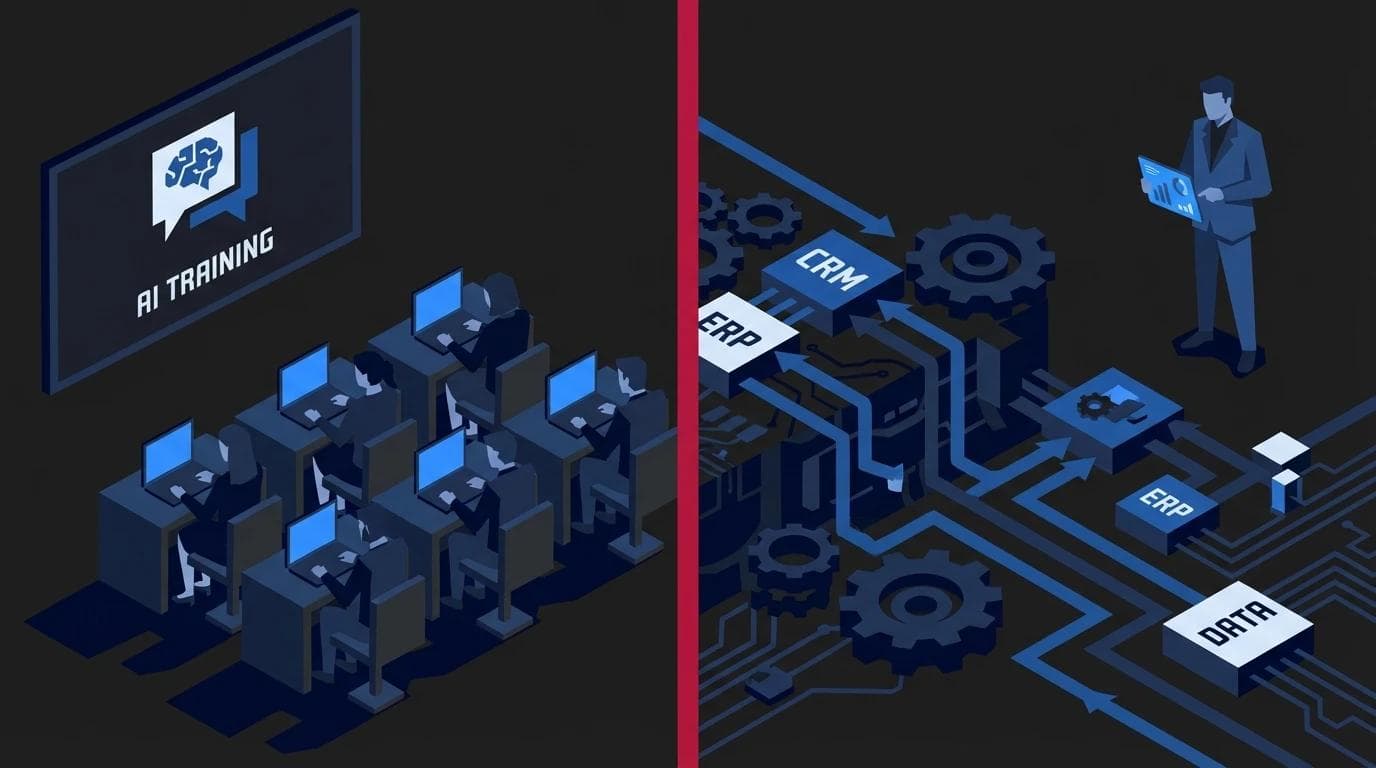

AI training law and what every employee needs to know about the EU AI Act

> What is the AI Act and how does EU law affect daily AI use at work?

The AI Act is the world's first comprehensive regulation of the artificial intelligence market. It introduces a legal framework for the safe and ethical use of algorithms in business. EU law requires organizations to classify their tools by risk level, which in turn obliges teams to handle company data deliberately and responsibly. In practice, every AI implementation in a company should be preceded by a risk and compliance assessment, so that the company is not sanctioned for exposing users to harm. Ignoring these regulations exposes enterprises to financial penalties of up to 35 million EUR or 7% of worldwide annual turnover for the most serious violations, which puts AI security in a company on par with the requirements known from the GDPR.

Boards can no longer ignore Shadow AI - a situation where employees, on their own initiative, enter sensitive data into public content or code generators. The new regulations make companies responsible for the algorithms they use for commercial purposes. Professional AI training for business thus becomes an essential element of mitigating legal and financial risks.

The AI Act introduces a rigorous categorization of systems, which directly determines how process automation can be designed within organizational structures:

- High-risk systems - include solutions affecting recruitment, creditworthiness assessment, or health, requiring full technical documentation and human oversight.

- Limited-risk systems - chatbot-type tools (e.g., ChatGPT), which must clearly inform the user that they are interacting with artificial intelligence.

- Minimal-risk systems - simple spam filters or algorithms in games that do not require additional audit procedures.

For management, it is crucial to understand that every team member should undergo substantive AI legal training to distinguish safe tools from those that may violate intellectual property. A well-formulated digitalization strategy must now include an audit of the licenses the company holds and of how the AI models behind its internal systems were trained.

Implementing AI Act principles is not only protection against penalties but, above all, building a market advantage based on the ethical use of technology. Transparency in using algorithms builds customer trust, especially when advanced dedicated applications powered by company databases are implemented. Understanding EU law is the foundation upon which a modern, safe, and responsible digital transformation is built.

> Key takeaways for teams using AI tools

Understanding legal regulations is not just a matter of avoiding penalties, but above all, building a lasting market advantage based on trust and transparency. For modern operational teams, the most important conclusion is that ignorance is no excuse - every interaction with an algorithm, from a simple query to advanced analytics, carries specific legal consequences.

- Risk classification and prohibited practices - the EU AI Act bans certain practices outright and introduces a rigid framework for high-risk systems. Using a free bot to evaluate a candidate's CV already enters the high-risk sphere, which requires the company to implement rigorous control mechanisms. Neglecting AI security in a company can lead to personal data protection violations, for which the organization bears responsibility.

- Transparency obligation and content labeling - every employee must know when material created for a client comes from a machine and requires appropriate labeling. According to the regulations, AI-generated content must be identifiable so that the recipient is not misled. This is a fundamental element of ethics explained by substantive AI training for business, emphasizing safe external communication.

- Infrastructure security and code ownership - implementing local, closed systems on the company's own server significantly reduces its legal exposure. Unlike public tools, where entered data may be used to train models, dedicated applications are designed to keep company information isolated. When choosing ChatGPT training for business, it is worth focusing on Enterprise solutions that protect intellectual property from unauthorized use.

- Education as a prevention tool - the AI Act imposes an obligation on entrepreneurs to ensure an appropriate level of knowledge for employees operating AI systems. Regular training is not only a productivity boost but, above all, a shield protecting against legal errors. Comprehensive AI training for companies allows teams to understand algorithm mechanisms, which is essential for the safe implementation of process automation in daily work.

> System classification according to the AI Act - which tools are safe in the office?

The European Artificial Intelligence Regulation (AI Act) introduces a hierarchical security structure, dividing technology into four risk groups. In office practice, most popular tools, such as chatbots for writing emails or code assistants, fall into the minimal or limited risk category, which mainly requires informational transparency from companies. In contrast, systems affecting key aspects of life, such as credit scoring or automatic CV selection in recruitment, are considered high-risk solutions (High Risk) and are subject to rigorous oversight and documentation requirements.

Understanding this pyramid is essential so that AI security in a company does not remain a dead letter but becomes a real element of operational strategy. The AI Act distinguishes the following levels:

- Unacceptable risk - systems completely banned in the European Union, such as subliminal techniques that manipulate behavior or social scoring of individuals.

- High risk (High Risk) - this category covers systems for managing critical infrastructure and education, as well as HR tools for initial candidate selection and credit scoring. If your organization plans to implement dedicated applications in these areas, you must provide robust technical documentation, high data quality, and constant human oversight.

- Limited risk - applies to tools such as chatbots (e.g., ChatGPT in standard applications) or image generators. Their implementation mainly involves the obligation to inform the user that they are interacting with an algorithm.

- Minimal risk - includes most spam filters in email, video games, or simple auxiliary scripts. These solutions do not require additional legal actions from the entrepreneur.
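
The four levels above can be sketched as a simple lookup that a team might keep next to its tool inventory. This is a minimal illustration, not a legal classification: the use-case names, the `classify` and `required_actions` helpers, and the choice to treat unknown use cases as high risk until reviewed are all assumptions made for this example.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case keys, based only on the examples named in the list above.
# A real classification requires legal review of the concrete system.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "subliminal_manipulation": RiskTier.UNACCEPTABLE,
    "cv_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "critical_infrastructure": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "image_generation": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
    "video_game_ai": RiskTier.MINIMAL,
}

# Obligations per tier, paraphrased from the list above.
REQUIRED_ACTIONS = {
    RiskTier.UNACCEPTABLE: ["do not deploy"],
    RiskTier.HIGH: ["technical documentation", "data quality checks", "human oversight"],
    RiskTier.LIMITED: ["inform users they are interacting with AI"],
    RiskTier.MINIMAL: [],
}


def classify(use_case: str) -> RiskTier:
    """Return the tier for a known use case.

    Unknown use cases are treated as HIGH so that they get a manual review
    (an assumption of this sketch, not a rule from the regulation).
    """
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


def required_actions(use_case: str) -> list[str]:
    """Return a copy of the obligations attached to the use case's tier."""
    return list(REQUIRED_ACTIONS[classify(use_case)])
```

For example, `required_actions("cv_screening")` returns the three high-risk obligations, while `required_actions("spam_filter")` returns an empty list.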

For managers, the key is to distinguish between simple support in daily tasks and the automation of decisions with a high social impact. While general AI training for companies focuses on productivity growth, specialized AI legal training must explain the differences between free versions and enterprise-class solutions. 01tech experts emphasize that when implementing process automation, one should always verify whether a given data flow qualifies as a high-risk system. By choosing substantive AI training for business, your team will gain the competencies necessary to build a safe and compliant market advantage.

> Why is AI legal training essential for every employee?

Widespread access to language models has made every office computer a potential exit point for sensitive company data. Knowledge of the regulations is now the foundation of AI security in a company, as the EU AI Act imposes specific obligations on organizations regarding transparency and ethics. This is not solely the domain of the legal department - your company is only as safe as its least informed employee. If someone from the marketing department generates an image using AI and publishes it as an "authentic photo" of your product without an appropriate annotation, they expose the entire organization to serious reputational damage and to legal consequences for misleading consumers.

Legal education is primarily about protecting intellectual property and trade secrets. Employees must understand that uncontrolled pasting of source code fragments, confidential financial reports, or sales strategies into public chatbots can result in that material ending up permanently in the training sets of technology providers. Substantive AI training for business helps the team learn where the boundary of safe experimentation lies. A legally aware employee can distinguish tools with acceptable risk from those that may lead to a violation of GDPR or third-party copyrights.

Practical AI training for companies focuses on developing internal procedures (a so-called AI Policy) that regulate daily work. These procedures cover key aspects such as:

- Copyright verification - understanding who owns the content generated by algorithms and how to safely commercialize it.

- Shadow AI risk management - eliminating the habit of using private accounts in AI tools for professional tasks.

- Marking synthetic content - fulfilling informational obligations resulting from the AI Act in communication with the client.

- Personal data hygiene - techniques for anonymizing queries sent to external models.
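
As an illustration of the last point, a prompt can be passed through a small redaction step before it leaves the company network. This is a minimal sketch under stated assumptions: the `redact` function, the placeholder tokens, and both patterns are hypothetical, and two regular expressions will not catch every form of personal data (names, addresses, and ID numbers are not covered).

```python
import re

# Simplified e-mail pattern; good enough for a demonstration, not a full RFC 5322 matcher.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Loose phone pattern: optional "+", then 9 to 15 digits that may be
# separated by single spaces or hyphens.
PHONE_RE = re.compile(r"\+?\d(?:[\s-]?\d){8,14}")


def redact(prompt: str) -> str:
    """Replace e-mail addresses and phone numbers with placeholder tokens."""
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    prompt = PHONE_RE.sub("[PHONE]", prompt)
    return prompt


if __name__ == "__main__":
    print(redact("Contact jan.kowalski@example.com or +48 600 700 800 about the offer"))
```

A filter like this is only a first line of defense; it reduces accidental leaks but does not replace an approved, closed environment for working with customer data.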

Investment in legal competencies is not a cost, but a protective shield. Instead of blocking innovation with fear of penalties, we teach teams how to use modern solutions responsibly. As a result, the process automation implemented in your organization becomes a stable tool for building market advantage rather than a time bomb.

> Transparency obligation - when must you inform that content was created by artificial intelligence?

Transparency in the use of artificial intelligence has ceased to be merely a matter of ethics and has become a hard legal requirement. According to the EU AI Act, people must be informed when they are interacting with an algorithm, and AI-generated content must be disclosed, especially in the case of deepfakes, texts affecting public opinion, or photorealistic images. The main principles of AI legal training emphasize that the recipient must not be misled as to the origin of the content.

The golden rule in B2B business is simple - do not deceive your partner or client. When building professional process automation, we must ensure that every interaction with a machine is clearly signaled. If, for example, we launch an advanced support bot for a client that operates on internal company procedures, the first message the user receives must be along the lines of: "You are talking to a virtual assistant, do you want to switch to an operator?". Such transparency builds trust and avoids recipient frustration, which is crucial when analyzing how to measure ROI from AI training.

The labeling obligation also applies to audiovisual materials. If your company generates marketing graphics using Midjourney or voice clones for podcasts, the law requires such content to carry metadata or markings visible to the human eye (e.g., a watermark or the note "AI-generated"). Missing labels expose the organization to legal and reputational risk, and they are often a symptom of Shadow AI, which we write more about when discussing AI security in a company.

Understanding these intricacies is essential for every operational employee and manager. Therefore, professional AI training for business devotes a significant part of the time to discussing practical legal and ethical aspects. The most important informational obligations include:

- Interaction with bots - the user must know they are talking to a machine, not a human.

- Deepfakes and voice clones - the necessity of clearly informing that the image or sound has been digitally manipulated.

- Informational texts - if AI generates content regarding public affairs or important consumer decisions, information about algorithmic assistance is required.
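
The obligations above can be built into the publishing and chat flow so that nobody has to remember them manually. The sketch below is a hypothetical illustration: the `Content` type, the `open_chat_session` and `label_for_publication` helpers, and the label format are assumptions for this example, and a visible text label is only one of several ways to mark content.

```python
from dataclasses import dataclass

# Disclosure shown before any other bot message (wording taken from the example in this article).
BOT_DISCLOSURE = "You are talking to a virtual assistant, do you want to switch to an operator?"
AI_LABEL = "AI-generated"


@dataclass
class Content:
    body: str
    ai_generated: bool


def open_chat_session() -> list[str]:
    """Start a transcript whose first message is always the disclosure."""
    return [BOT_DISCLOSURE]


def label_for_publication(item: Content) -> str:
    """Append a visible label to AI-generated content.

    Human-written content and content that already carries the label
    are returned unchanged.
    """
    if item.ai_generated and AI_LABEL not in item.body:
        return f"{item.body}\n[{AI_LABEL}]"
    return item.body
```

Placing the check in one function means that marketing, support, and sales tools can all call the same step before anything reaches a client.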

> How to implement AI Act rules in a company without blocking innovation?

Implementing the EU Artificial Intelligence Regulation (AI Act) in an organization does not have to mean bureaucratic paralysis or halting work on new solutions. The most effective strategy is to move away from reflexive prohibitions in favor of building a safe technical infrastructure and clear legal frameworks. Companies that want to maintain growth dynamics should focus on creating an Internal AI Policy, conducting a tool audit, and regularly educating management and operational staff. The foundation is understanding that AI data security in a company is not a cost but an investment protecting the enterprise's intellectual capital.

The key to success is not blocking progress, but taking control over so-called Shadow AI - the unauthorized use of free tools by employees. After conducting an audit, it is worth setting up safe communication channels with language models in the company. As engineers, we recommend giving teams concrete tools, such as sandboxes on the company's own server, where employees can work legally on company data without the risk of it leaking into public training sets. In such a model, blocking access to public, free chats becomes a natural hygiene step, not a limitation of creativity. Often the optimal solution is dedicated applications, which we design to meet regulatory requirements at the code architecture level.

Practical steps for managers include:

- Audit of used tools - identifying all processes in which the team uses AI and assessing their risk according to the AI Act classification.

- Implementation of safe infrastructure - replacing public versions of ChatGPT with Enterprise or API solutions that guarantee data privacy.

- Competency training - regular AI training for managers helps them understand the difference between safe and risky technology implementation.

- Automation of compliance processes - using intelligent scripts to monitor how AI processes data within the organization.
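
The first and last steps can be combined in a small script that walks through the tool inventory and reports what needs attention. This is a sketch only: the `ToolRecord` fields, the `audit_findings` function, and the wording of the findings are hypothetical, and the risk tier of each tool is assumed to have been assigned by a person beforehand.

```python
from dataclasses import dataclass


@dataclass
class ToolRecord:
    name: str
    used_by: str                # team or department
    risk_tier: str              # "minimal", "limited", "high", or "unacceptable"
    approved: bool              # sanctioned by the company (e.g., an Enterprise or API plan)
    handles_company_data: bool


def audit_findings(inventory: list[ToolRecord]) -> list[str]:
    """Return one finding per tool that needs action; compliant tools produce none."""
    findings = []
    for tool in inventory:
        if tool.risk_tier == "unacceptable":
            findings.append(f"{tool.name}: prohibited practice, stop use")
        elif not tool.approved and tool.handles_company_data:
            findings.append(f"{tool.name}: Shadow AI, move {tool.used_by} to an approved channel")
        elif tool.risk_tier == "high":
            findings.append(f"{tool.name}: high risk, verify documentation and human oversight")
    return findings


if __name__ == "__main__":
    inventory = [
        ToolRecord("Free public chatbot", "Marketing", "limited", False, True),
        ToolRecord("CV screening tool", "HR", "high", True, True),
        ToolRecord("Spam filter", "IT", "minimal", True, False),
    ]
    for finding in audit_findings(inventory):
        print(finding)
```

Run periodically, a report like this gives managers a short list of actions instead of a blanket ban.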

Many companies fear that legal requirements will slow down their operations. Meanwhile, well-thought-out AI training for business shows that understanding AI Act principles allows for faster design of stable systems. Instead of building on uncertain ground, the organization creates process automation that is resistant to changes in regulations. It is also worth checking available funding options for such actions - our AI training funding guide will be helpful, facilitating the acquisition of funds for digital transformation compliant with the new law. A comprehensive approach, combining education with safe technology, is the only way to utilize the full potential of artificial intelligence without exposing the company to the high financial penalties provided for in the regulation.

> FAQ - most common questions about AI legal training and EU regulations

The AI Act is a reality that affects every company, regardless of its size. As engineers from 01tech, we emphasize - small and medium-sized enterprises (SMEs) are also subject to these regulations if they implement or provide AI systems within the European Union. Although penalties are moderated based on turnover, for smaller entities they can still mean financial paralysis, which is why professional AI training for business should today include not only efficient prompting but, above all, legal and ethical awareness.

Does the AI Act prohibit using free ChatGPT at work?

No, the AI Act does not introduce a total ban on using tools like free ChatGPT, but it does expect companies to assess the risk reliably and ensure transparency. The main problem here is AI security in a company, as public versions of chatbots may, by default, use entered data to train their models. If an employee enters a financial report or customer personal data into the chat window without the board's knowledge, the company risks breaching personal data protection and trade secret regulations. That is why reliable ChatGPT training for business places such great emphasis on switching to closed models or using secure API connections.

Who bears legal responsibility for errors generated by AI?

Under the AI Act, the company using an AI system (the deployer, here the employer) carries its own obligations and cannot simply pass responsibility for the outcome to the technology provider. If AI generates an error in code that causes losses or suggests a wrong financial strategy, the company cannot hide behind model hallucination. In the relationship with the employee, human oversight (human-in-the-loop) remains key. When building advanced dedicated applications or systems based on intelligent process automation, we always implement safeguards requiring final approval of results by a human. An employee can bear disciplinary responsibility only if they ignored internal procedures and regulations regarding work with digital tools.

When do AI Act regulations become mandatory for companies?

The AI Act entered into force in August 2024, but its application is phased in through transition periods. Prohibitions on systems with unacceptable risk (e.g., social scoring or emotion recognition in the workplace) began to apply first, after 6 months. Most standard obligations for companies using AI will apply in full after 24 months. This transition period is the ideal time for education and for creating an AI policy - our comprehensive guide to AI training for companies emphasizes that building competencies is a continuous process. Companies that start auditing their systems and training teams today will not only avoid penalties but also build a culture of innovation based on security and full technological ownership.
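
The phased timeline can be kept as data so that internal tools or reminders can check which stage applies on a given day. The sketch below assumes the calendar dates of 1 August 2024 for entry into force, 2 February 2025 for the prohibitions, and 2 August 2026 for general application; these dates and the helper names are additions for illustration and should be verified against the official text of the regulation.

```python
from datetime import date

# Assumed calendar dates for the stages described above (verify against the official text).
MILESTONES = [
    (date(2024, 8, 1), "AI Act enters into force"),
    (date(2025, 2, 2), "Prohibitions on unacceptable-risk systems apply"),
    (date(2026, 8, 2), "Most remaining obligations apply"),
]


def milestones_in_effect(today: date) -> list[str]:
    """Return the labels of all milestones that have already started on the given day."""
    return [label for start, label in MILESTONES if start <= today]


def days_until_general_application(today: date) -> int:
    """Return the number of days left until the last milestone, or 0 if it has passed."""
    return max((MILESTONES[-1][0] - today).days, 0)
```

For instance, a compliance dashboard could call `days_until_general_application(date.today())` to show how much of the transition period remains.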