SUBJECT: Data Digitization and Resource Consistency Guide

Data Digitization Process Step-by-Step and Resource Consistency Methods

> What is the digitization process and why data consistency determines implementation success

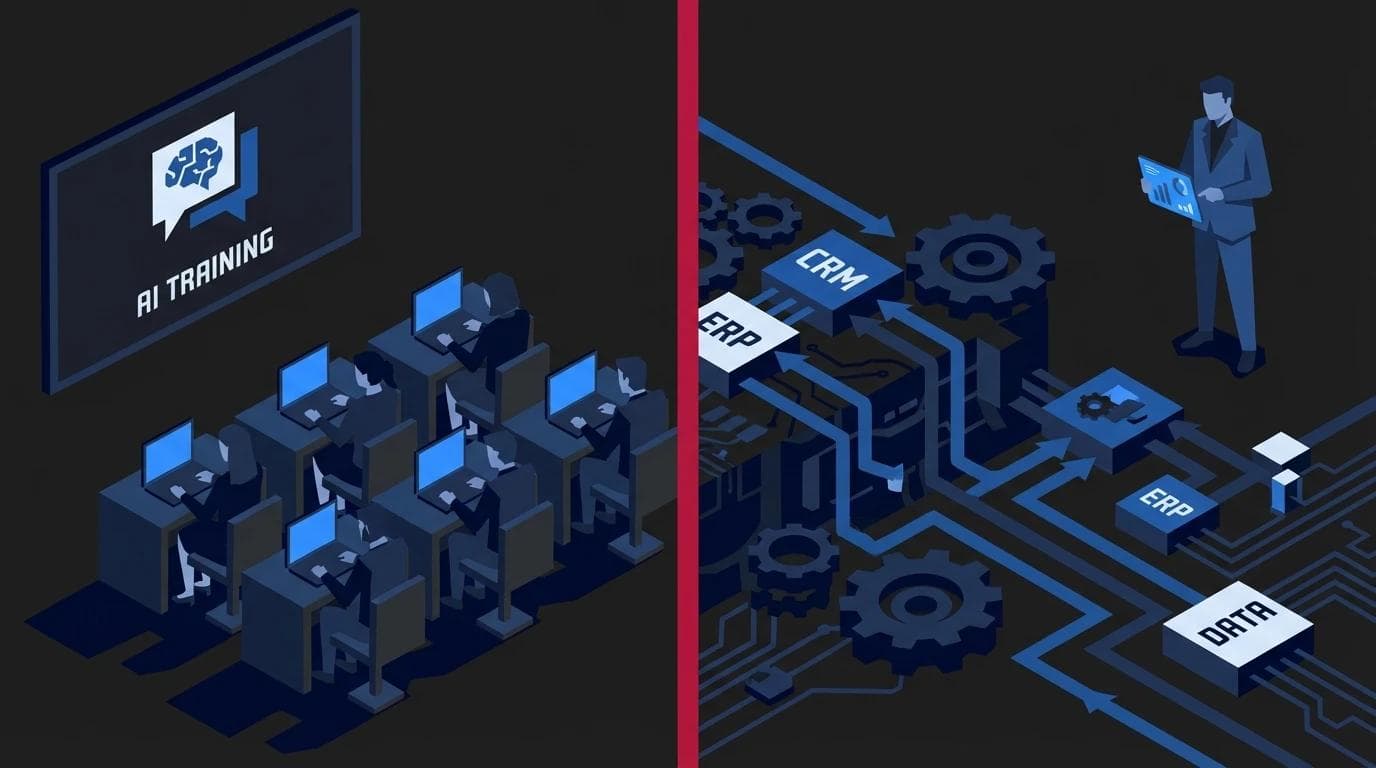

The digitization process is a strategic transformation of analog resources and scattered information into structured digital records that can be automatically processed by IT systems. From a business perspective, it is not just about changing the format from paper to electronic, but about creating a foundation for efficient organizational management. A correctly executed transformation allows for instant access to reliable information, which directly translates into faster decision-making and the elimination of bottlenecks in daily operations.

At 01tech, we say it clearly: the digitization process is not mindlessly retyping pages into a computer. It is the transformation of chaotic information into organized records, and it must be preceded by an analysis of how information flows through the company. If your company plans to grow, your digitization strategy should emphasize data quality at the source. The IT industry follows an old rule: garbage in, garbage out. Even the most expensive software will not fix errors caused by sloppiness at the data entry stage.

You can spend a million PLN on a new management system, but if you feed it incorrect, outdated, or duplicate data, the system will simply generate incorrect financial reports - only faster. This is why custom applications are so effective, as they allow for the design of strict validation rules that prevent the entry of inconsistent records. Data consistency determines whether the implementation brings a return on investment (ROI) or becomes a costly digital archive of chaos.

When document digitization is implemented, unifying formats and naming conventions becomes crucial. Without it, employees waste time searching for files that every department names differently. Properly planned process automation requires that the machine can interpret every field in the database without guesswork. Only clean data allows for building advanced ecosystems where technology truly supports people rather than generating additional problems.

Key Takeaways - digitization process

- Digitization is not copying - it consists of transforming chaos into organized record structures.

- Garbage in, garbage out principle - errors in input data lead to incorrect reports and analyses.

- Consistency as a priority - implementation success depends on the quality and uniformity of information across the entire IT ecosystem.

- Foundation for automation - without reliable data, it is impossible to effectively implement AI systems or automatic workflows.

> Stage one: audit and resource cleaning before migration

Audit and resource cleaning are the foundation without which any digitization process is doomed to inefficiency and unnecessary costs. Before we write the first line of code for a new application, we must perform a major cleanup, because migrating chaos 1:1 is simply throwing money down the drain. Identifying errors, duplicates, and outdated information allows you to avoid situations where the new system replicates the errors of the old work model.

In our practice, we often encounter a huge "data debt" that hinders business growth. We had an e-commerce client whose customer base was scattered across three different Excel sheets. The lack of consistency resulted in numerous typos in addresses and mass duplication of companies, which directly translated into logistical errors and costly package returns. Only after thoroughly digitizing the documents and organizing the records could the new technology be implemented safely.

An effective pre-migration audit should focus on three key areas:

- Verification of record uniqueness - removing duplicates of customers, products, or orders, which prevents analytical paralysis in the new environment.

- Data correctness validation - checking if date formats, tax IDs, or postal codes comply with standardized rules, which greatly facilitates subsequent process automations.

- Elimination of redundant data - removing archival information and unnecessary fields that have no operational value and only burden the infrastructure.
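The first two audit areas above can be illustrated with a minimal sketch. It assumes customer records are plain dictionaries, that a duplicate means the same name and e-mail after normalization, and that postal codes follow the Polish `NN-NNN` pattern. The field names and sample data are hypothetical and serve only as an illustration.

```python
import re
from datetime import datetime

# Assumed postal code pattern (Polish style, e.g. 00-001).
POSTAL_CODE = re.compile(r"\d{2}-\d{3}")


def normalize_key(name: str, email: str) -> tuple[str, str]:
    """Build a comparison key: lowercase, with collapsed whitespace."""
    clean_name = " ".join(name.lower().split())
    return clean_name, email.strip().lower()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each normalized (name, email) key."""
    seen = set()
    unique = []
    for record in records:
        key = normalize_key(record["name"], record["email"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def find_problems(record: dict) -> list[str]:
    """Return the names of fields that break the assumed format rules."""
    problems = []
    if not POSTAL_CODE.fullmatch(record.get("postal_code", "")):
        problems.append("postal_code")
    try:
        datetime.strptime(record.get("created", ""), "%Y-%m-%d")
    except ValueError:
        problems.append("created")
    return problems


# Hypothetical sample: the same company entered twice with different spelling.
customers = [
    {"name": "Acme Sp. z o.o.", "email": "office@acme.example",
     "postal_code": "00-001", "created": "2023-05-14"},
    {"name": "  ACME  sp. z o.o. ", "email": "Office@Acme.example ",
     "postal_code": "00001", "created": "14/05/2023"},
]

unique_customers = deduplicate(customers)
report = {c["email"]: find_problems(c) for c in customers}
```

In a real audit the duplicate key would usually be agreed with the business first (for example tax ID instead of name and e-mail), since that decision determines which records are merged.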

Understanding that clean data is the fuel for technology is a key element of every mature digitization strategy. If managers neglect database hygiene at this stage, even the most advanced custom applications will not bring the expected return on investment. Instead of real optimization, the company will only receive a digital version of the mess that previously paralyzed work in spreadsheets or traditional archives.

> How to technically plan the data digitization process in a custom system

Technical planning of digitization involves transforming raw records into useful business information through rigorous database architecture design. Unlike off-the-shelf solutions, custom applications allow for the creation of structures that enforce order and logical consistency from day one. The key is to define strict validation rules and precise format mapping, which eliminates the risk of creating a so-called data swamp.

Field mapping and format transformation

Field mapping is the process where we determine how data from existing Excel sheets or paper forms should look in the new system. It often happens that the same information - for example, an order date - is recorded in five different formats, making subsequent analytics impossible. Effective document digitization requires imposing a single, rigid data entry mask at the import stage.
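As a rough illustration of field mapping, the sketch below translates legacy spreadsheet column names into target field names and refuses any column that has no agreed mapping. Both the legacy headers and the target names are invented for this example.

```python
# Hypothetical mapping from legacy spreadsheet headers to target fields.
FIELD_MAP = {
    "Customer Name": "customer_name",
    "Order Dt": "order_date",
    "Amt": "amount",
}


def map_row(legacy_row: dict) -> dict:
    """Rename legacy columns; fail loudly on columns without a mapping."""
    unknown = set(legacy_row) - set(FIELD_MAP)
    if unknown:
        raise KeyError(f"Unmapped legacy columns: {sorted(unknown)}")
    return {FIELD_MAP[column]: value for column, value in legacy_row.items()}


mapped = map_row(
    {"Customer Name": "Acme", "Order Dt": "2023-05-14", "Amt": "120.50"}
)
```

Failing on an unmapped column, rather than silently dropping it, is a deliberate choice here: it forces a decision about every legacy field before the import runs.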

Proper transformation covers several key areas:

- Currency unification - the system automatically converts historical amounts according to a set rate or blocks the ability to enter a currency symbol in a numeric field.

- Contact data formatting - automatic removal of spaces from phone numbers and ensuring the postal code contains the required hyphen.

- Date normalization - converting various records (for example, DD/MM/YYYY to YYYY-MM-DD) to a database standard, allowing for correct sorting and reporting.
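The transformations listed above could look roughly like the following sketch. It assumes day-first dates in the legacy data, Polish-style five-digit postal codes, and amounts written with a dot as the decimal separator. All function names are illustrative.

```python
import re
from datetime import datetime
from decimal import Decimal

# Assumed legacy formats, tried in order. Day-first is an assumption:
# a value like 03/04/2023 is read as 3 April, not 4 March.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")


def normalize_date(raw: str) -> str:
    """Convert any known legacy format to YYYY-MM-DD."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {raw!r}")


def normalize_phone(raw: str) -> str:
    """Remove all whitespace from a phone number."""
    return re.sub(r"\s+", "", raw)


def normalize_postal_code(raw: str) -> str:
    """Ensure the postal code has the form NN-NNN (assumed Polish format)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) != 5:
        raise ValueError(f"Postal code must have 5 digits: {raw!r}")
    return f"{digits[:2]}-{digits[2:]}"


def parse_amount(raw: str) -> Decimal:
    """Accept plain numbers only; reject currency symbols and other text."""
    text = raw.strip()
    if not re.fullmatch(r"\d+(\.\d{1,2})?", text):
        raise ValueError(f"Amount must be numeric without a currency: {raw!r}")
    return Decimal(text)


examples = (
    normalize_date("14/05/2023"),
    normalize_phone("+48 600 100 200"),
    normalize_postal_code("00001"),
    parse_amount("120.50"),
)
```

Values that match none of the known formats raise an error instead of being guessed, so they can be routed to manual review during import.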

Automatic validation as a guardian of information quality

In ready-made, boxed SaaS systems, you must bend your processes to what the software creator envisioned, which often leads to quality compromises. With an individual digitization strategy, we build hard guards instead: validation rules written for your company's specific processes. The system will not allow an employee to save an order without a correct tax ID or with a key attachment missing.

This approach keeps process automations reliable, because algorithms operate on records that have already passed validation and are complete. Instead of fixing errors after the fact, we protect the company from human mistakes at the data entry stage. As a result, business information remains reliable for years, and the database does not degrade as the number of records grows.
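A save-time guard of the kind described in this subsection might be sketched as follows. The `Order` type, the `save_order` function, and the in-memory `storage` list are hypothetical. The tax ID check assumes a Polish NIP (10 digits with a weighted checksum), so a different rule would be needed for other tax ID schemes.

```python
from dataclasses import dataclass, field

# Weights for the first nine digits of a Polish NIP (assumed tax ID scheme).
NIP_WEIGHTS = (6, 5, 7, 2, 3, 4, 5, 6, 7)


class ValidationError(Exception):
    """Raised when an order breaks a rule and must not be saved."""


def is_valid_nip(tax_id: str) -> bool:
    """Check length, digits, and the modulo-11 checksum of a NIP."""
    digits = tax_id.replace("-", "").replace(" ", "")
    if len(digits) != 10 or not (digits.isascii() and digits.isdigit()):
        return False
    checksum = sum(w * int(d) for w, d in zip(NIP_WEIGHTS, digits)) % 11
    return checksum == int(digits[9])


@dataclass
class Order:
    customer_tax_id: str
    attachments: list[str] = field(default_factory=list)


def save_order(order: Order, storage: list[Order]) -> None:
    """Append the order to storage only if every rule passes."""
    errors = []
    if not is_valid_nip(order.customer_tax_id):
        errors.append("tax ID is missing or fails the checksum")
    if not order.attachments:
        errors.append("required attachment is missing")
    if errors:
        raise ValidationError("; ".join(errors))
    storage.append(order)


storage: list[Order] = []
save_order(Order("123-456-32-18", ["contract.pdf"]), storage)
```

In a production system the same rules would typically also be enforced at the database level, so that imports and integrations cannot bypass them.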

> Technological ownership vs. security and control over the digitization process

Full control over the digitization process is only possible when the company owns the source code and system architecture. Choosing the CapEx model instead of subscription-based SaaS allows you to avoid the technological ceiling that appears during dynamic business growth. When key resources are located on an external provider's servers, you lose real influence over how your data is protected and processed. Owning the software guarantees that strategic customer information and unique operational processes do not physically leave your infrastructure.

Keeping key data in a third-party cloud is de facto giving up control over the company's lifeblood. In situations where the business evolves and there is a need to combine sales data with logistics in a unique, proprietary way, users of closed systems often hear that a given module does not support it. This is why custom applications are so important for maintaining flexibility, as they allow for database modeling without the software provider's pre-imposed limitations.

Technological ownership translates into specific benefits in the area of digital structure management:

- Data sovereignty - you are certain that sensitive information is stored according to your security standards, not the general terms of a mass provider.

- No vendor-imposed limits - you can freely modify the code, introducing, for example, process automations that are strictly tailored to your workflow, not an average market norm.

- Integration without barriers - by owning full documentation and code, you can seamlessly connect the system with IoT and hardware solutions, creating a coherent ecosystem.

Even if your first step is basic document digitization, it is worth thinking about the target architecture from the start. A well-planned digitization strategy should take into account that investing in code ownership pays off through higher security and no license fees in the future. This also allows for the free implementation of innovations, such as AI training for business, which draws on your unique datasets rather than on publicly available databases that competitors can also use.

> Using artificial intelligence to maintain database consistency

In professional technological implementations, artificial intelligence has ceased to be just a flashy gadget and has become the foundation of data hygiene. The modern digitization process relies on algorithms that act as an invisible back-office worker, ensuring that every piece of information goes to the right place without human intervention.

Instead of relying on manual retyping, companies are increasingly implementing process automations where AI acts as a quality guardian. Algorithms can process hundreds of scanned PDF invoices in a fraction of the time manual entry takes, extract the amounts, and automatically fill in the appropriate fields in the system. This matters especially when document digitization is implemented in a small company, where errors at the data entry stage can affect all subsequent financial analytics.

Using artificial intelligence connected directly to the company's structures allows for:

- Automatic categorization - the system independently recognizes the document type and assigns it to the correct project or department based on context, not just keywords.

- Anomaly detection - the algorithm acts as an intelligent fuse that can block a process and send an alert if it notices an employee has entered an amount with one extra zero.

- Database cleaning - constant consistency monitoring prevents duplicates and incorrect record formats, which is almost impossible to achieve with manual work.
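The "one extra zero" case from the anomaly detection point can be approximated even without a trained model. The sketch below is a simple rule-based heuristic, not machine learning: it flags an amount that is roughly ten times the median of a customer's past amounts. The function name, the tolerance, and the sample history are assumptions made for illustration.

```python
from statistics import median


def looks_like_extra_zero(
    amount: float, history: list[float], tolerance: float = 0.2
) -> bool:
    """Flag an amount that is about 10x the median of past amounts.

    With tolerance=0.2, ratios between 8 and 12 are flagged.
    """
    if not history:
        return False  # nothing to compare against
    typical = median(history)
    if typical <= 0:
        return False
    ratio = amount / typical
    return abs(ratio - 10) <= 10 * tolerance


# Hypothetical past invoice amounts for one customer.
history = [1200.0, 1150.0, 1300.0, 1250.0]

suspicious = looks_like_extra_zero(12000.0, history)
ordinary = looks_like_extra_zero(1280.0, history)
```

A flagged amount would block the process and trigger an alert for human review rather than being corrected automatically, since a genuinely large order can look the same as a typo.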

For these mechanisms to work effectively, custom applications are necessary, allowing for deep integration of machine learning models with existing IT infrastructure. Only by owning the code, as discussed in the section on technological ownership, does an enterprise have full control over how algorithms are trained and what data they actually process.

For teams that want to manage these tools themselves and understand their potential, AI training for business is helpful, teaching how to configure systems to truly support operations. It is worth remembering that in advanced ecosystems, these solutions are often combined with IoT and hardware, where data flowing directly from machines is verified for logical consistency with production reports. If you plan to implement such control systems, please visit the contact section to discuss your database's possibilities.

> Frequently asked questions about the data digitization process in an enterprise

The digitization process raises many questions, especially among managers responsible for operational profitability. Understanding that this is not just a technical operation but a strategic step allows for better resource planning and avoiding costly downtime.

How long does the full data digitization process take?

Thorough cleaning and organizing of data is usually a matter of several weeks. The exact schedule depends primarily on how much mess has accumulated in the company over the years and what form (analog or scattered digital) the information is currently stored in.

In our practice, we advise against digitizing everything at once. It is best to start with key active data, such as current price lists and active customer bases, which allows for rapid implementation of process automations. Old archives can wait their turn, so they do not block the launch of the new system. Effective document digitization should be implemented in stages, allowing the company to maintain operational continuity.
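The staged approach can be sketched as a simple split of records into a first migration wave and an archive backlog. The `last_activity` field and the 365-day threshold are assumptions chosen for the example, and the right cut-off depends on the business.

```python
from datetime import date


def split_into_waves(records, today, active_within_days=365):
    """Separate recently active records from archival ones."""
    first_wave, archive = [], []
    for record in records:
        days_idle = (today - record["last_activity"]).days
        if days_idle <= active_within_days:
            first_wave.append(record)
        else:
            archive.append(record)
    return first_wave, archive


# Hypothetical customer records with their last activity date.
records = [
    {"id": 1, "last_activity": date(2024, 5, 10)},
    {"id": 2, "last_activity": date(2019, 1, 3)},
]

first_wave, archive = split_into_waves(records, today=date(2024, 6, 1))
```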

What are the biggest risks when moving data to a new system?

The biggest threat is the incorrect interpretation of data fields and the loss of their integrity during import. When systems do not speak the same language, situations can occur where historical sales data is assigned to the wrong categories, permanently distorting business analytics.

Key risks include:

- Loss of historical data - lack of proper migration procedures can lead to the irreversible deletion of valuable information.

- Performance issues after import - an unoptimized database structure can drastically slow down software performance, which is why a well-thought-out digitization strategy developed before the import is so important.

- Format incompatibility - errors in data types require tedious manual correction, significantly increasing project costs.
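One way to catch lost or altered records after an import is to compare a fingerprint of every record in the old and new systems. The sketch below assumes both sides can be exported as lists of dictionaries that share an `id` field. The function names and sample rows are hypothetical.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Hash a record in a canonical form so field order does not matter."""
    canonical = json.dumps(record, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(source: list[dict], target: list[dict], key: str = "id") -> dict:
    """Report records missing from the target or changed during import."""
    source_prints = {r[key]: fingerprint(r) for r in source}
    target_prints = {r[key]: fingerprint(r) for r in target}
    missing = sorted(set(source_prints) - set(target_prints))
    changed = sorted(
        k for k in source_prints.keys() & target_prints.keys()
        if source_prints[k] != target_prints[k]
    )
    return {"missing": missing, "changed": changed}


# Hypothetical exports: record 3 was lost, record 2 changed category.
source_rows = [
    {"id": 1, "category": "A", "total": "100.00"},
    {"id": 2, "category": "B", "total": "250.00"},
    {"id": 3, "category": "A", "total": "75.50"},
]
target_rows = [
    {"id": 1, "category": "A", "total": "100.00"},
    {"id": 2, "category": "C", "total": "250.00"},
]

result = reconcile(source_rows, target_rows)
```

If the import intentionally normalizes values, such as dates or phone numbers, the same normalization has to be applied to the source export before fingerprints are compared, otherwise every transformed record is reported as changed.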

Do I need my own IT team for data digitization?

Digitization is primarily a business project, not an IT one. It requires strong commitment and decision-making from management, as they know best how data should work for them in daily practice and what goals it should achieve.

The technological partner's task is to provide the appropriate tools to secure this process from the technical side. When we design custom applications, we take on the technical and architectural burden, but the definition of business logic always lies with the client. If your company does not have an extensive IT department, the optimal solution is direct contact with the implementation team, who will conduct a resource audit and propose a safe migration path.